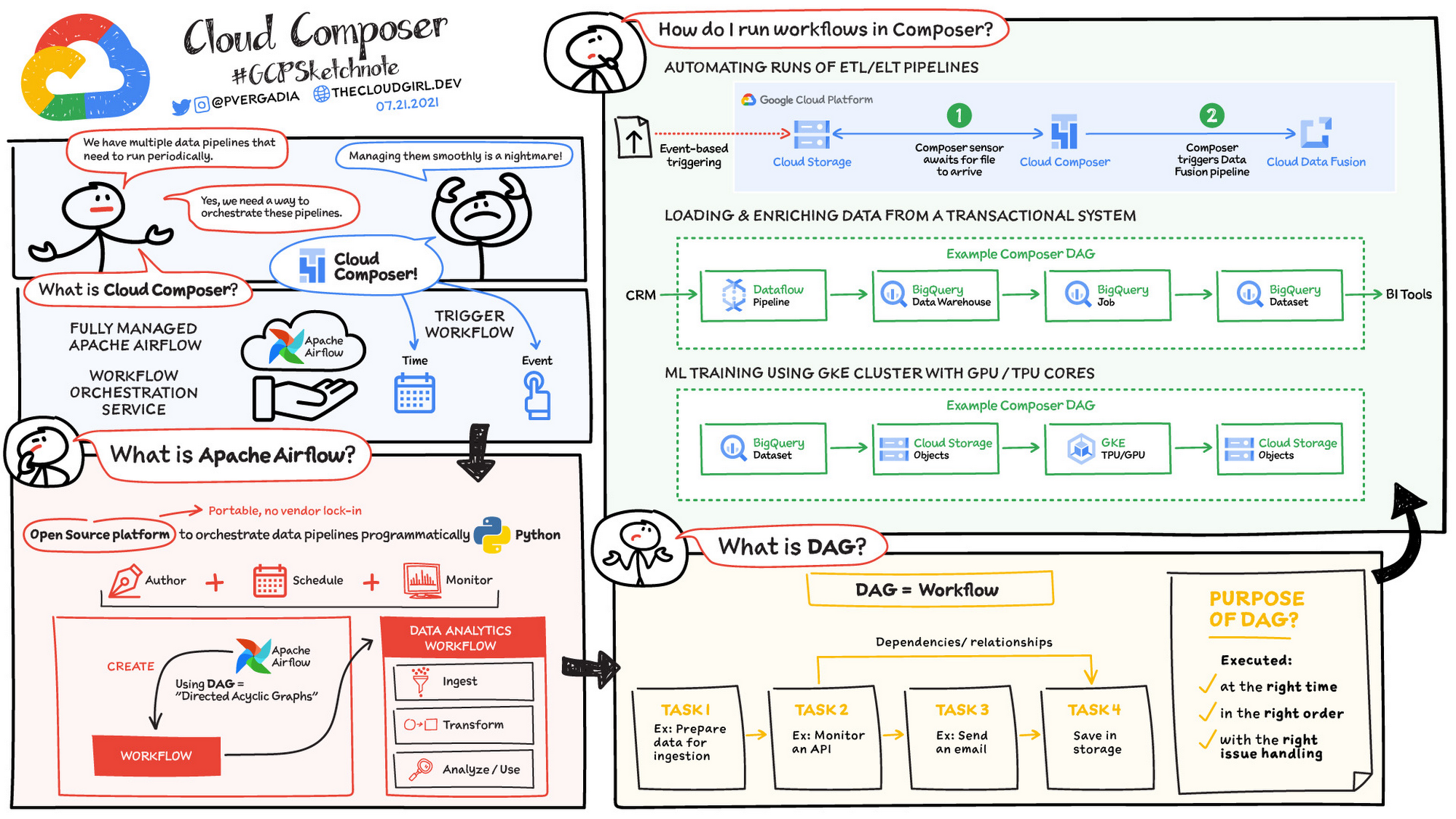

- Simplify Your Data Warehouse Analysis Using Hevo's No-code Data Pipeline
- Google Airflow Integration Step 1: Create Compute Engine Instance
- Google Airflow Integration Step 2: Install Apache Airflow
- Google Airflow Integration Step 3: Setting up Airflow
- Google Airflow Integration Step 4: Open Firewall
- Google Airflow Integration Step 5: Start Airflow

Apache Airflow is a popular open-source tool mainly used for scheduling and monitoring workflows. Airflow lets users define workflows as Directed Acyclic Graphs (DAGs) of tasks. It is widely used in data engineering, and its easy-to-navigate UI shows dependencies, logs, job progress, task successes and failures, and more. Airflow can connect to multiple data sources and send alerts about a job's status via email or other notifications.

Dynamic: Apache Airflow pipelines are coded entirely in Python, which allows dynamic pipeline generation based on the DAG and SubDAG concepts. Users can write Python code to generate dependent pipelines.

Extensible: Because Apache Airflow is built on Python, users can quickly define custom operators and executors by extending its libraries.

Elegant: Apache Airflow pipelines are lean and explicit. Airflow uses Jinja as its templating engine, which allows a high degree of customization. Its UI lets users monitor progress, schedule jobs, link tasks, and so on.

Scalable: Apache Airflow has a scalable architecture and uses message queues to communicate with workers.

Easy to Use: Apache Airflow is relatively easy to use. A user with a little Python programming background can work with it.

Pure Python: Apache Airflow is pure Python, so users can build workflow pipelines with any library available in Python.

With version 2.0, Apache Airflow adds many new features and design changes that make it a strong scheduling tool for industry use.

Redesigned Scheduler: Airflow 2.0 has switched from a single scheduler to a multi-scheduler design. The single scheduler in earlier versions of Airflow was a single point of failure. Upgrading to the multi-scheduler therefore improves performance and scalability and provides high cluster availability.

Full REST API: In earlier versions of Airflow, engineers used the experimental API to trigger DAGs. With the comprehensive REST interface in Airflow 2.0, users can now check and manage DAGs, triggers, and task instances. The REST API provides XCom variables, DAG runs, schedulers, graphs, and success/failure messages. Users can also see tree and graph representations of tasks and DAGs.

Smart Sensors: Sensors are a special kind of operator that checks a particular task's final state before moving on to the next one.
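To make the idea of a workflow as a DAG of tasks more concrete, here is a minimal sketch that uses only the Python standard library (`graphlib`, Python 3.9+), not Airflow itself. The table and task names are hypothetical. It generates tasks in a loop, which is the same idea behind dynamic pipeline generation, and then derives a run order that respects every dependency.

```python
from graphlib import TopologicalSorter

# Hypothetical source tables; adding a name here adds two tasks to the workflow.
tables = ["orders", "customers"]

# Map each task name to the set of tasks it depends on.
dependencies = {}
for table in tables:
    dependencies[f"extract_{table}"] = set()
    dependencies[f"load_{table}"] = {f"extract_{table}"}

# A final task that waits for every load task to finish.
dependencies["report"] = {f"load_{table}" for table in tables}


def execution_order(deps):
    """Return one valid run order; raises graphlib.CycleError if deps contain a cycle."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

In Airflow the scheduler does this ordering for you. The sketch only shows why the graph must be acyclic: a cycle leaves no valid order in which to run the tasks.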
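As an illustration of triggering a DAG through the Airflow 2.0 REST API, the sketch below builds (but does not send) a request to the stable API's DAG-run endpoint. The host, DAG id, and credentials are placeholders, and it assumes the Airflow deployment has basic authentication enabled for the API; other auth backends would need a different header.

```python
import base64
import json
from urllib.request import Request


def build_trigger_request(base_url, dag_id, username, password, conf=None):
    """Build a POST request that asks Airflow to create a new run of dag_id."""
    url = f"{base_url.rstrip('/')}/api/v1/dags/{dag_id}/dagRuns"
    # Basic auth header; assumes the basic-auth API backend is enabled.
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    body = json.dumps({"conf": conf or {}}).encode()
    return Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


# Placeholder values for illustration only.
request = build_trigger_request(
    "http://localhost:8080", "example_dag", "admin", "admin",
    conf={"run_date": "2021-01-01"},
)
print(request.get_method(), request.full_url)
```

To actually send it against a running webserver, pass the request to `urllib.request.urlopen(request)` and read the JSON response.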
